Vision-language models (VLMs) are a big leap for vision AI. They reason about vision at a higher level, and they're much easier to use than the previous generation of models. But they've been hard to put into production for three reasons. First, they're slow, and production systems often need real-time decisions. Second, they struggle to run locally, which production systems often require for security, reliability, or cost. Third, they suffer from a "last-mile" problem: the VLM looks promising in the lab, but in the real world, accuracy falls short.

Moondream is a different kind of VLM. It's purpose-built for production systems, and unsurprisingly, we've been tackling these three problems. First, we built small models that are lightweight enough to run everywhere. Then we launched Photon, our inference engine that achieves 20ms inference time on an H100. And today, we're happy to announce the launch of Lens, our fine-tuning product that solves the "last-mile" problem.

Lens in Action: PTZOptics

We've been working with a partner, PTZOptics, which makes network-attached, remote-controlled cameras. In many cases, customers want the camera to act as if a smart camera operator were controlling it: following the action of a soccer game, zooming in and out at crucial times in a presentation, or detecting anomalies for security or operational reasons.

With Moondream, this is now a reality. You can have the camera track complicated things ("the person in the red shirt"), take inventory of what's shown, or get alerted when actions occur ("someone's hurt"). And with Lens, you can teach Moondream new skills, or tune it when the accuracy is lacking.

Simple API, Pay-as-You-Go

Lens is a simple API for fine-tuning Moondream, with support for both reinforcement learning (RL) and supervised fine-tuning. There's no hardware to set up or binaries to worry about, and we've seen great results with as few as a dozen images. Pricing is token-based, so you only pay for what you use. See our pricing page for details.

As soon as you're done improving the model, you can invoke it immediately through our Cloud, or run it locally with Photon. It's the easiest and fastest way to go from fine-tune to production.

See the Difference

Here are a few examples of Lens fine-tunes across very different domains. In each case, the base model struggled, and the fine-tuned model nailed it.

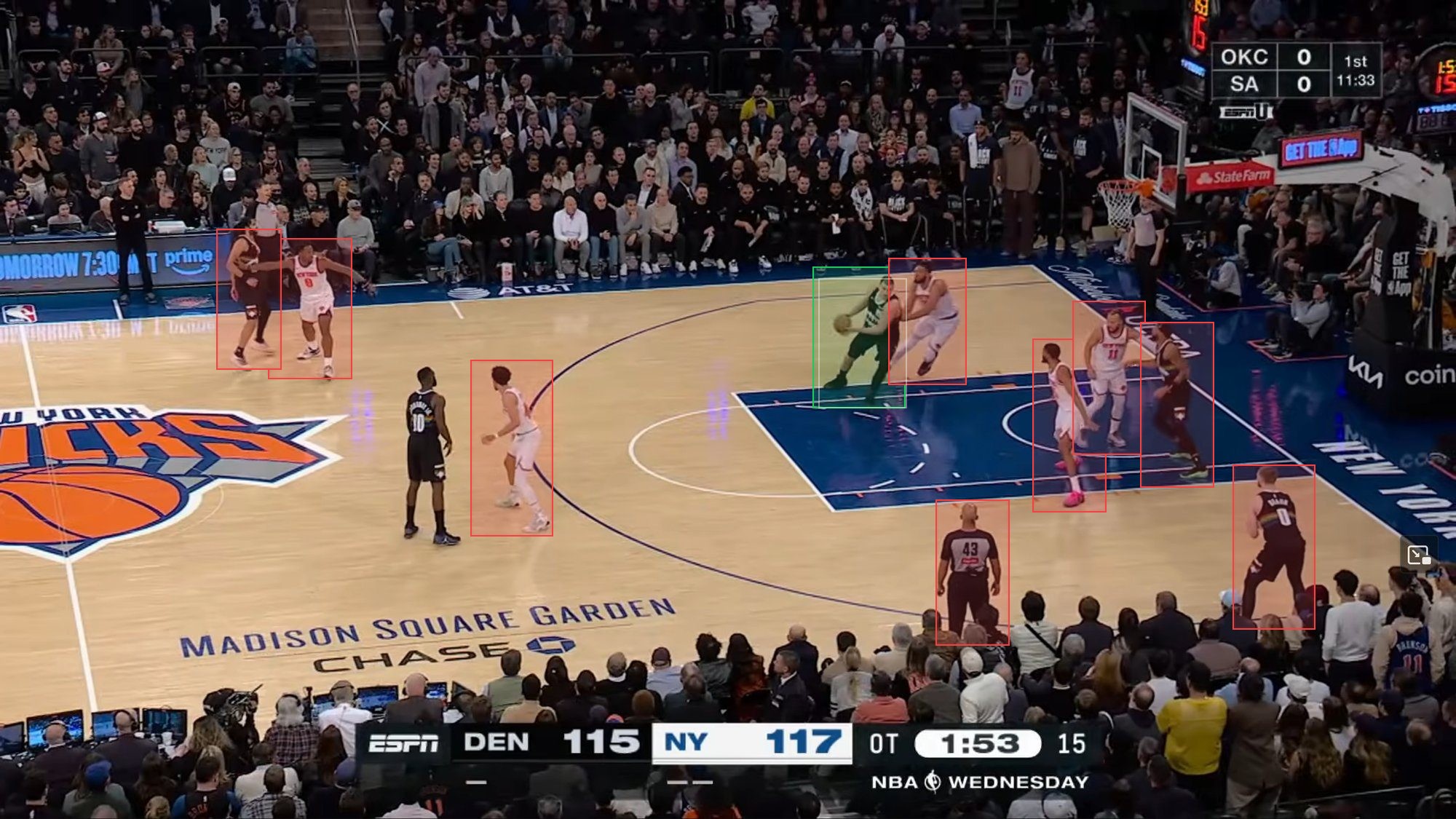

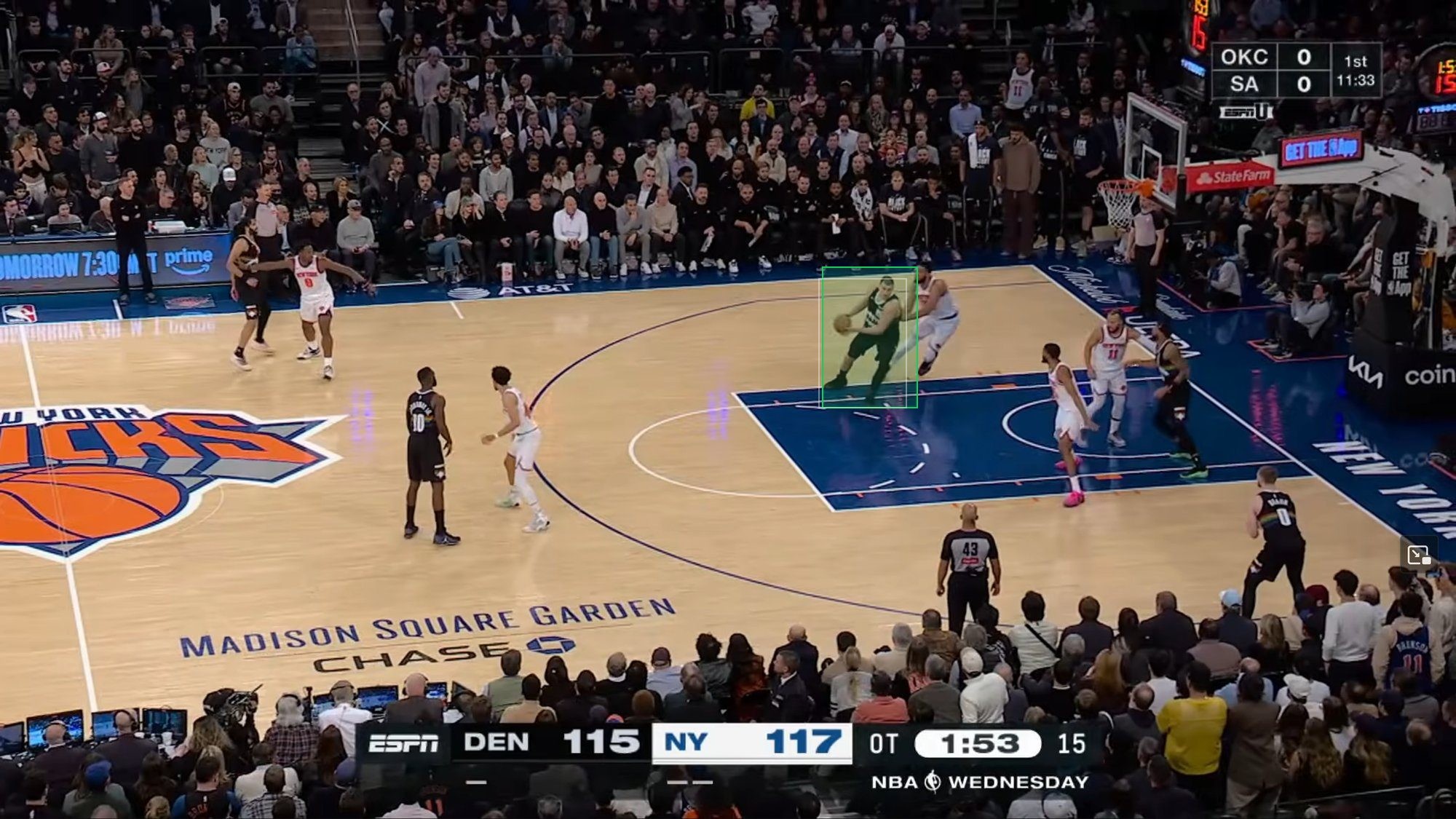

Broadcast Sports: Detecting the Ball Handler

We fine-tuned Moondream to detect the player with the ball in NBA broadcast footage. The base model returned dozens of false positives (red boxes). After fine-tuning with RL, it finds just the ball handler (green box). F1 jumped from 28% to 79%, and false positives dropped from 61 to 2.

Training took 54 minutes and cost $16.89. See the full interactive example →
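
If F1 is new to you, it's the harmonic mean of precision and recall, so a flood of false positives drags the score down even when the model does find the right player. Here's a minimal sketch of that relationship. The function name `f1_score` and the counts below are made up for illustration; they are not the numbers or code behind the results above.

```python
def f1_score(tp: int, fp: int, fn: int) -> float:
    """Compute F1 from true-positive, false-positive, and false-negative counts."""
    # Precision: of everything the model flagged, how much was correct.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    # Recall: of everything it should have flagged, how much it found.
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return 0.0
    # Harmonic mean of precision and recall.
    return 2 * precision * recall / (precision + recall)


# Hypothetical counts: same hits and misses, very different false-positive load.
noisy = f1_score(tp=8, fp=40, fn=2)
clean = f1_score(tp=8, fp=1, fn=2)
print(f"noisy: {noisy:.2f}, clean: {clean:.2f}")
```

With the same number of correct detections, cutting false positives alone is enough to move F1 a long way, which is the pattern you'd expect when a detector stops boxing every player on the court.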

Geolocation: Country Identification from Street View

We trained Moondream to identify countries from street-view imagery. The base model guesses the wrong continent entirely. After fine-tuning with just 25 images per country, it reads road markings, signage, and landscape cues correctly, reaching 71.1% accuracy versus 69.8% for GPT-5.4.

Training took 3 hrs 24 min and cost $53.28. See the full interactive example →

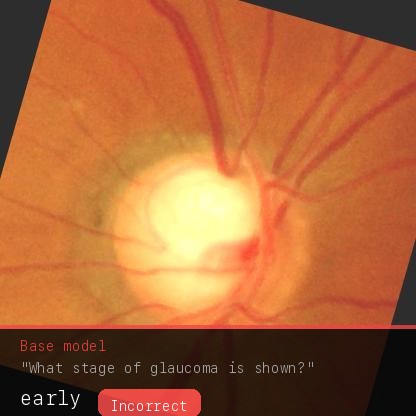

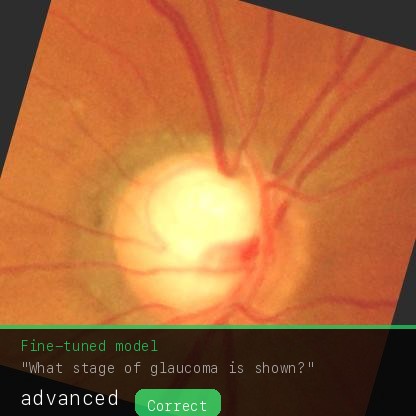

Medical Imaging: Glaucoma Staging

We fine-tuned Moondream to classify retinal images by glaucoma severity. The base model defaulted to "early" for nearly every image. The fine-tuned model distinguishes severity correctly, performing 2x better than GPT-5.4.

Training took 47 minutes and cost $15.68. See the full interactive example →

You can explore all of our fine-tune examples at moondream.ai/p/lens.

Need Help Getting Started?

If you're new to vision AI and fine-tuning, we can help. Our dedicated production team can work with you to deliver a fine-tuned model for your specific use case. Contact sales@moondream.ai for more info.