Glaucoma Detection

Classify retinal fundus images into three glaucoma stages: normal, early, or advanced. The base model reaches only 17.6% accuracy. After 100 reinforcement learning (RL) steps, the fine-tuned model reaches 69.2%, more than double GPT-5.4's 33.2%.

[Figure: Accuracy]

| Training setting | Value |
|---|---|
| Method | RL |
| Steps | 100 |
| Training time | 47 min |
| Cost | $15.68 |

See it in action

Compare the base model against the fine-tuned model across representative benchmark examples.

Prompt

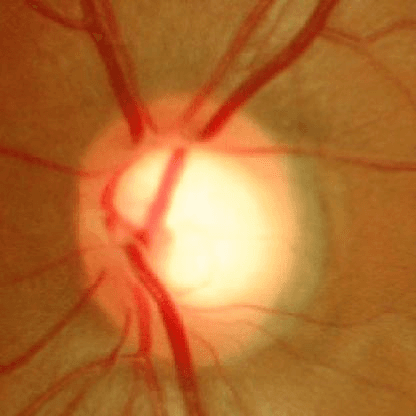

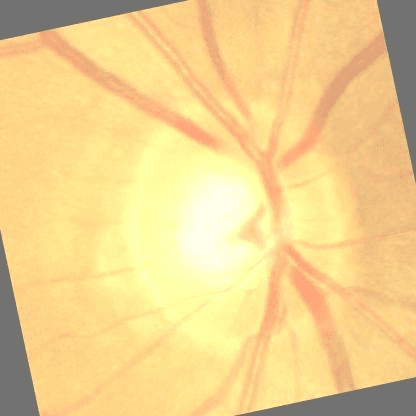

What stage of glaucoma is shown in this retinal image? Respond with one of: normal, early, advanced only.

| Ground truth | Base model | Fine-tuned model |
|---|---|---|
| Advanced glaucoma | Incorrect: early | Correct: advanced |
| Normal | Incorrect: early | Correct: normal |
| Early glaucoma | Incorrect: (no response) | Correct: early |
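The Correct/Incorrect grading in these examples can be sketched as a small scoring helper. This is a minimal sketch under stated assumptions, not the benchmark's actual evaluation code. The source does not say how answers are normalized, so `parse_stage` and `accuracy` are hypothetical helpers. They accept a lenient match (for example "Advanced." or "advanced glaucoma") and count empty or ambiguous answers as incorrect, as with the base model's missing response.

```python
import re
from typing import Optional, Sequence

# The three labels the prompt allows.
STAGES = ("normal", "early", "advanced")


def parse_stage(text: str) -> Optional[str]:
    """Map a raw model answer to one of STAGES, or None if it is unusable.

    Hypothetical helper: accepts answers such as "early", "Early." or
    "advanced glaucoma"; answers naming zero or several stages return None.
    """
    words = re.findall(r"[a-z]+", text.lower())
    found = {word for word in words if word in STAGES}
    return found.pop() if len(found) == 1 else None


def accuracy(outputs: Sequence[str], labels: Sequence[str]) -> float:
    """Fraction of outputs whose parsed stage equals the ground-truth label.

    Unparseable outputs (including empty responses) count as incorrect.
    """
    if len(outputs) != len(labels):
        raise ValueError("outputs and labels must have the same length")
    if not labels:
        return 0.0
    correct = sum(parse_stage(out) == label for out, label in zip(outputs, labels))
    return correct / len(labels)


# The three examples above: ground truth, base outputs, fine-tuned outputs.
labels = ["advanced", "normal", "early"]
base_outputs = ["early", "early", ""]
tuned_outputs = ["advanced", "normal", "early"]

print(accuracy(base_outputs, labels))   # 0.0
print(accuracy(tuned_outputs, labels))  # 1.0
```

On the three examples shown here, it scores the base model 0 of 3 and the fine-tuned model 3 of 3. The reported 17.6% and 69.2% come from the full benchmark, which is not reproduced here.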

Perfection in 3 steps

What is fine-tuning?

Moondream starts as a general model trained on broad, public information. Fine-tuning makes it great at one specific task by teaching it the products, documents, categories, or internal information that matter to your business.

Who is this for?

This is for teams putting vision AI into production that need frontier performance at real-time speed. If you already know the task and need the model to master it, fine-tuning is how you get there.

See the code

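Fine-tuning is a small API loop: turn your data into requests, stream rollouts from the model, score them with a reward function, and pass the scored rollouts to `train_step`, which updates the model as you go.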

```python
import moondream as md

# Create fine-tune
ft = md.ft(
    api_key="your-api-key",
    name="Glaucoma Detection",
    rank=8,
)

# Hidden boilerplate and data code: defines `training_data` (an iterable of
# examples with an "image" field) and `compute_rewards`, which are not shown.

# Pair each example with a "query" request that samples 4 rollouts
# (candidate answers) for the same image and question.
requests = (
    (
        example,
        {
            "skill": "query",
            "image": example["image"],
            "question": "What stage of glaucoma is shown in this retinal image? Respond with one of: normal, early, advanced only.",
            "num_rollouts": 4,
        },
    )
    for example in training_data
)

# For each streamed response, score its rollouts and apply one RL update.
for context, response in ft.rollout_stream(requests):
    rewards = compute_rewards(context, response)
    ft.train_step([{
        "mode": "rl",
        "request": response["request"],
        "rollouts": response["rollouts"],
        "rewards": rewards,
    }])
```
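The loop relies on `compute_rewards`, which lives in the hidden boilerplate. Here is a minimal sketch of one possible reward, assuming a binary exact-match scheme (the source does not show the real one). `stage_rewards` is a hypothetical helper that gives 1.0 to each rollout answer naming the correct stage and 0.0 otherwise. It works on plain strings because the format of `response["rollouts"]` is not shown. A real `compute_rewards(context, response)` would still need to pull the label out of `context` and the answer text out of each rollout.

```python
from typing import List, Sequence

# The three labels the prompt allows.
STAGES = ("normal", "early", "advanced")


def stage_rewards(label: str, answers: Sequence[str]) -> List[float]:
    """Score each rollout answer for one image.

    Hypothetical helper: returns 1.0 if the answer is exactly the correct
    stage (ignoring case, surrounding whitespace, and a trailing period),
    else 0.0. The actual reward used for this fine-tune is not shown.
    """
    if label not in STAGES:
        raise ValueError(f"unknown label: {label!r}")
    rewards = []
    for answer in answers:
        normalized = answer.strip().rstrip(".").strip().lower()
        rewards.append(1.0 if normalized == label else 0.0)
    return rewards


# Four rollouts for one image whose true stage is "early".
print(stage_rewards("early", ["early", "Early.", "advanced", ""]))
# [1.0, 1.0, 0.0, 0.0]
```

Matching strictly, instead of searching the answer for a stage name, rewards the bare one-word answer the prompt asks for, so the RL updates also push the model toward that output format. Partial credit or format penalties are other options; nothing in the source indicates which one the Glaucoma Detection run used.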

Ready to take Moondream to production?

Need help? We'll build it for you.

We can help define the task, prepare the data, run training, validate results, and hand off a model your team can use.